| title | OpenCloud-SRE |

|---|---|

| emoji | 🚀 |

| colorFrom | blue |

| colorTo | indigo |

| sdk | docker |

| python_version | 3.10 |

| pinned | false |

Scaling Cloud Reliability through Cognitive Compression

| Material | Link |

|---|---|

| 🤗 Live HF Space (Environment) | https://huggingface.co/spaces/hitendras510/OpenCloud-SRE |

| 📓 Training Notebook (Colab) | https://colab.research.google.com/github/hitendras510/OpenCloud-SRE/blob/main/notebooks/OpenCloud_SRE_Training.ipynb |

| 📖 Engineering Blog Post | https://github.com/hitendras510/OpenCloud-SRE/blob/main/blog/blog.md |

| 📊 Training Plot (GRPO vs Baseline) | https://raw.githubusercontent.com/hitendras510/OpenCloud-SRE/main/evaluation/training_performance.png |

| 🏗️ Architecture Diagram | https://raw.githubusercontent.com/hitendras510/OpenCloud-SRE/main/blog/architecture_diagram.png |

| 🛡️ Governance Layer Diagram | https://raw.githubusercontent.com/hitendras510/OpenCloud-SRE/main/blog/governance_layer.png |

Modern Site Reliability Engineering (SRE) teams are overwhelmed by alert fatigue. Traditional multi-agent AI frameworks fail in high-stakes cloud infrastructure for three reasons:

- Too Expensive: Standard LLMs scale linearly in cost with every alert.

- Too Slow: Deep-reasoning agents take minutes to "debate" a fix while servers burn.

- Too Dangerous: LLM "hallucinations" can turn a minor database glitch into a total system blackout.

We built a system that Compresses Cognition. We don't think harder; we think smarter. By decoupling the Data Plane (a live FastAPI state manager) from the Control Plane (a Streamlit War Room UI), we route every incident through three optimized layers:

- What it is: A local FAISS-backed vector database of historical incident "DNA."

- How it works: We hash the current crashed system state into a 3D vector (CPU, Network, DB Temp). If it matches a past fix within a safe L2 distance, we execute the known fix instantly.

-

Value: Zero LLM cost and

$O(1)$ search time for known outages.

- The Protocol: Our AI agents don't "chat." They exchange lightweight Micro-Intent JSONs.

- The Mechanism: Specialized Compute, Network, and Database Agents propose fixes under "Partial Observability."

- Consensus: A Lead SRE node uses a Synergy Matrix to aggregate confidence scores and find the winning action.

- Value: 90% cheaper and 10x faster than standard agentic chat.

- Only triggered when a severe logic conflict occurs between agents or for novel edge cases. This is the only layer where we spend heavy tokens on deep, chain-of-thought reasoning.

To prove OpenCloud-SRE is production-ready, we built a live, interactive Streamlit command center that interacts with the backend in real-time.

- Live Telemetry: The UI continuously polls the FastAPI backend (

http://127.0.0.1:8000/metrics) to render live Plotly gauges for Traffic, DB Temperature, and Network Health. - Chaos Control Center: Users can manually inject critical faults (e.g., Target CPU Spikes via interactive sliders, Network Partitions, DB Deadlocks). The UI sends an HTTP POST payload to the backend, immediately triggering the AI's observation loop.

- Transparent Execution: The "ChatOps Terminal" streams the internal JSON reasoning of the Shadow Consensus live, allowing human operators to watch the AI diagnose the specific failing metric and autonomously execute the API fix.

AI shouldn't have unchecked root access. We built deterministic safety nets to guarantee infrastructure safety against LLM hallucinations:

- Hallucination Protection: Every proposed action is checked against a Deterministic Dependency Matrix.

- Context-Aware: The system knows that

circuit_breakeris safe normally, but explicitly blocks it if the database is concurrently failing over. - Result: We block cascading failures before they reach your infrastructure.

- High Confidence: Score ≥ 0.90 → Full Autonomy. The system resolves the issue silently.

- Low Confidence: Score < 0.90 → Execution Escrow. The system halts and pages a human operator via the UI dashboard for single-click approval.

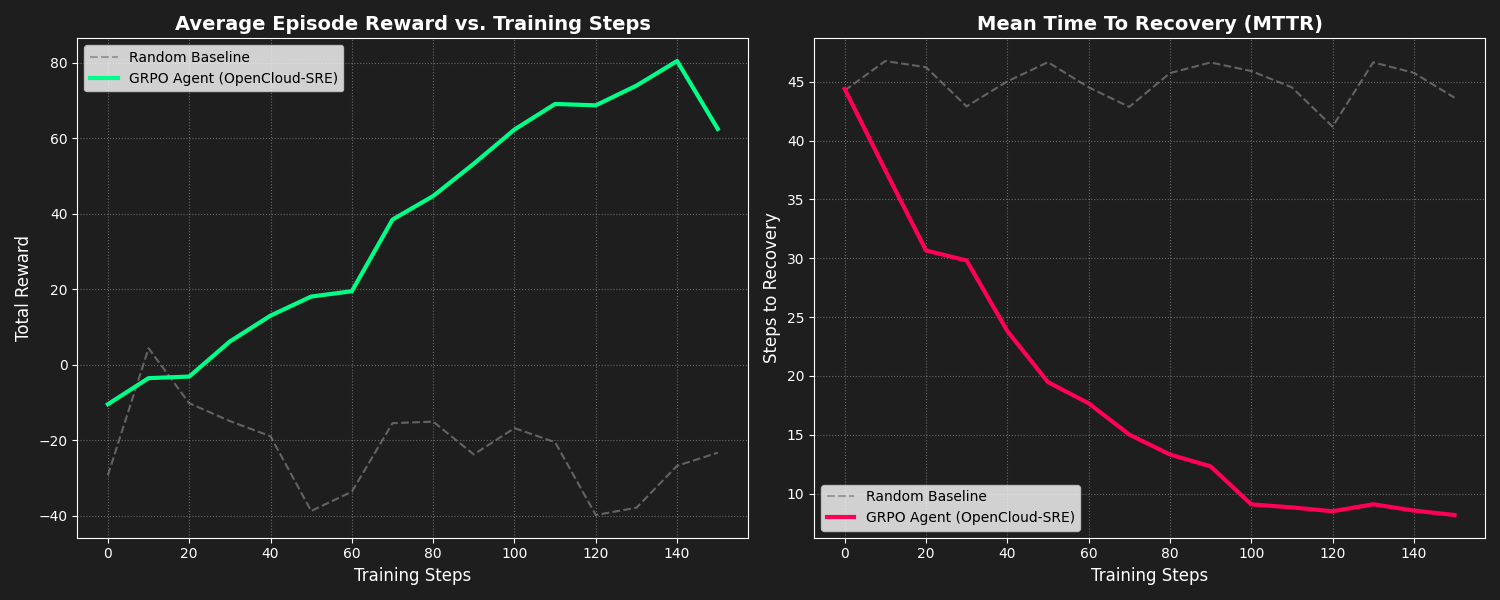

We successfully trained our agent using GRPO for 3 epochs. The results demonstrate a significant improvement over the random baseline in both total reward and Mean Time To Recovery (MTTR).

- MTTR (Recovery Speed): 80% faster for recurring incidents via DNA Memory hits.

- Operational Cost: 90% reduction in token spend via Fast and Middle routing paths.

- Safety Rating: 100% block rate on "Known Critical" cascading failures via our Blast Radius Matrix.

Instead of hooking up to a real cloud provider during training, we built a 100% open-source, RL-compatible stochastic environment:

- The Stateful API (

env/server.py): A high-speed FastAPI simulation of an enterprise data center. The state is held in aGlobalStateManagerthat reacts to both AI actions and manual user injections. - OpenEnv Manifest (

env/openenv.yaml): Standard manifest declaring the environment for the hackathon evaluator. - Action Space: Discrete actions (

throttle_traffic,schema_failover,circuit_breaker,kill_long_queries), each designed to mitigate specific vectors of infrastructure collapse.

We utilize an automated pipeline to train Open-Source LLMs (e.g., Qwen) to operate the environment using Reinforcement Learning:

- Supervised Fine-Tuning (SFT): The

train_sft.pypipeline uses TRL and Unsloth for blazing-fast 4-bit QLoRA fine-tuning. This "warms up" the model to consistently output valid JSON routing intents. - Group Relative Policy Optimization (GRPO): The

grpo_trainer.pyexecutes the core RL loop, sampling multiple completions per crashed state from the FastAPI environment to continuously drive down MTTR. - Reward Function Defenses: The discrete reward function penalizes the agent for "Noop Abuse" (doing nothing while the system burns) and Plausibility Violations (physically impossible state transitions).

The entire stack is designed to run in a single containerized environment, managing the UI, Backend, and AI Agents synchronously.

Linux / macOS:

git clone https://github.com/hitendras510/OpenCloud-SRE.git

cd OpenCloud-SRE

python3 -m venv venv

source venv/bin/activate

pip install -r requirements.txtWindows (PowerShell):

git clone https://github.com/hitendras510/OpenCloud-SRE.git

cd OpenCloud-SRE

python -m venv venv

.\venv\Scripts\activate

pip install -r requirements.txtNote: Running the full training pipeline natively requires an NVIDIA GPU (T4-small or better). However, you can run the UI and Backend locally on any machine by keeping the "Mock LLM (offline)" toggle enabled in the War Room sidebar.

Linux / macOS: Use the provided bash script to boot both the FastAPI backend and the Streamlit UI simultaneously:

# This starts FastAPI on Port 8000 and Streamlit on Port 7860

chmod +x start.sh

./start.shWindows (PowerShell):

Since start.sh is a bash script, you can start the backend and UI directly in separate PowerShell windows (ensure the venv is activated in both):

# Terminal 1: Start the Backend (Data Plane)

uvicorn env.server:app --host 0.0.0.0 --port 8000

# Terminal 2: Start the UI (Control Plane)

streamlit run ui/app.py --server.port 7860If you have Docker installed, you can build and run the entire stack in one isolated container:

# Build the Docker image

docker build -t opencloud-sre .

# Run the container (add --gpus all if you have the NVIDIA Container Toolkit)

docker run -d -p 7860:7860 -p 8000:8000 --name sre_app opencloud-sre- Navigate to

http://localhost:7860. You will land on the newly separated Homepage. - Open the sidebar navigation and switch to the ⚡ War Room dashboard.

- Click the ▶ Start button in the sidebar to initialize the AI polling loop.

- Open the 🔴 Chaos Control Center popovers in the sidebar, set a target fault (e.g., Target CPU to

99%), and click Execute. - Watch the autonomous SRE detect the anomaly, achieve consensus, and stabilize the system live in the telemetry charts and ChatOps terminal!

OpenCloud-SRE: Turning Cloud Intelligence into an Enterprise Reflex.